Building a home Network Attached Storage server. Part 2: RAID Setup

This article was written in 2010. Things change over time. So the information presented here may no longer be current and up-to-date, or it may have even become factually inaccurate. There may be useful information here, but you should check recent sources before relying on this article.

This is Part 2 in the Building a home Network Attached Storage server series. In this article I will talk about some of the options for the RAID arrays and the art of RAID configuration.

Now that we know what hardware this system is going to be built on we have to decide what technology is going to run it all. We still have several options for RAID controllers and software.

One item that really needs to be mentioned: if you use Windows computers to access the NAS, you will want to use Windows Vista, 7 or Server 2008 as the NAS operating system. This is for one simple reason: Windows Vista introduced Server Message Block 2.0 in 2006. SMB2 is a massive boost to network file transfer speeds. Where an XP machine may only be able to send 50MB/s over the network, the same machine running SMB2 will be pushing 80MB/s. So if you are going to be using Windows on your desktop, you probably want to be using Windows on your NAS.

The first step is deciding what RAID controller you are going to use for the system.

RAID controller options

There are several options for RAID arrays on the NAS server. The most obvious, and the one I chose, was RAID 5 using the on-board Intel ICH10R and Promise RAID controllers. Software RAID has the reputation of being even slower than fake-RAID, though I can’t comment on it in this instance because I didn’t bother trying it and running benchmarks. Here is my take on the RAID controller options:

On-board Intel ICH10R and Promise RAID

This is the cheapest and highest performance option for my budget. As I explained in the first article, the on-board ICH10R controller is an implementation of fake-RAID, a mix of hardware and software. You have to configure the ICH10R to read the SATA drives as a RAID array. It will then only show those drives to the operating system as an ICH RAID disk. The OS needs to have a driver installed to interface with the ICH controller and see the RAID drive as an actual usable hard drive.

Using the ICH10R controller means that if you ever have to replace the motherboard, and don’t want to lose the array, you will have to replace it with another Intel ICHxR motherboard. Revisions of Intel’s ICH RAID are backwards compatible, but not forwards compatible. This means that you will have to use a motherboard with ICH10R or higher.

The Promise controller is the same deal, but since I am using RAID 1 on that array I can literally take one of the RAID drives out and plug it into another computer and it will be recognized as a regular SATA drive with a regular partition. Instantly available.

Windows Server software RAID

The Windows Server family of operating systems has a fairly nice software RAID controller. Not really fast, not really slow. Windows Server software RAID has the benefit of being hardware independent. It doesn’t matter what hardware you have to replace in the system. Just tell Windows that those drives are in a RAID array and it will see them as such.

The obvious downside, and the problem that caused me to drop this option, is that you have to buy a copy of Windows Server. I don’t know if you’ve priced this software lately, but Windows Server 2008 is prohibitively expensive, like $700 expensive. There was no way I was going to spring that kind of cash for it.

There is Windows Home Server, which is much cheaper, but it is only available in a 32-bit version and has been neutered of most of the other beneficial Windows Server features.

Ubuntu software RAID

I am familiar with the Ubuntu operating system, so this was another one of the options I considered. Ubuntu also has support for Linux software RAID. It has all of the same benefits and drawbacks as Windows Server software RAID, with one major additional drawback that made me drop it as an option: it doesn’t have the Windows SMB2 system. On my Windows network SMB2 will make a night-and-day difference in network throughput.

OpenSolaris ZFS file system

The OpenSolaris ZFS is the most intriguing RAID option I’ve seen. It is a form of software RAID that is reportedly very fast, and very smart. After doing some reading on the ZFS file system and its native support and very smooth implementation for RAID, I was really interested. It’s been quite some time since I worked on a Solaris system so there would have been an extra learning curve, but in the end I dropped this option for the same reason as Ubuntu. It doesn’t have SMB2.

If you don’t plan on using Windows machines as the clients for the NAS, and don’t mind dealing with the quirks of Solaris, then this is my recommendation. ZFS is a very cool system and they are constantly improving it.

Buy a real hardware RAID controller

This wasn’t an option in my build, but if you have the cash, this is the best option. Hardware RAID controllers are much faster, much smarter, and offer better data security. They read faster, write faster, rebuild faster, and you can (and should) attach an internal battery backup. But as I stated in Part 1, this simply was not in my budget.

RAID level options

In my server I chose to use RAID 1 on the system array (2 drives) and RAID 5 on the storage array (6 drives). In my opinion this was the most stable and cost effective solution. However, I can’t do an article about NAS servers without discussing RAID level 10.

RAID 1

Mirroring. RAID 1 is pairs of mirrored drives. If one drive fails, the other drive in the mirror still has all of the data intact. This is the solution for a pair of drives with redundancy, which is why I chose this level for the pair of system drives. You can lose 1 drive in the mirror and still have all your data.

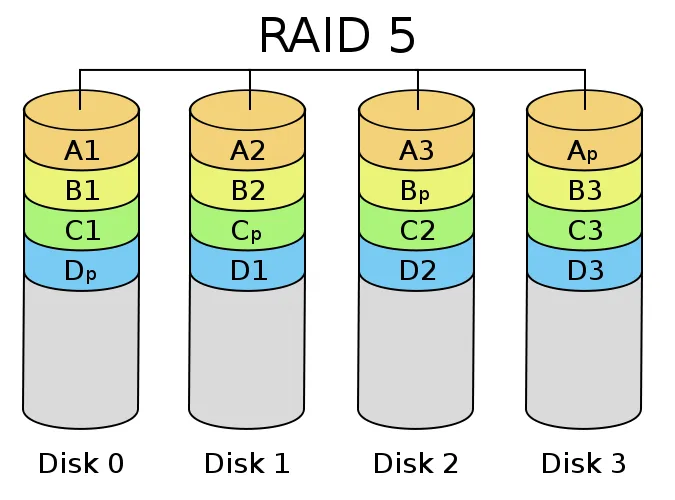

RAID 5

Striping + parity. In RAID level 5 you have a parity block for every stripe. This is the storage solution with the best cost/benefit ratio (in my opinion). Basically you lose one drive worth of storage capacity to parity (usable storage = n-1 drives). So my six 2TB drives in a RAID 5 array will have 10TB of storage (approximately 17% capacity reduction for redundancy). You can lose one drive in a RAID 5 array and still have your data; 2 drive failures means catastrophic data loss. The downside of RAID 5 is that redundancy is based on parity, and parity has to be recalculated and rewritten on every write. This makes RAID 5 writes much slower than the alternatives.
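To make the parity idea a little more concrete, here is a minimal Python sketch of how XOR parity lets an array rebuild one missing block. It is only an illustration: the block contents and the `xor_blocks` helper are made up for the example, and a real controller works on much larger blocks and rotates the parity across the drives.

```python
from functools import reduce


def xor_blocks(blocks):
    """Return the byte-wise XOR of a list of equally sized blocks."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


# One stripe on a six-drive RAID 5: five data blocks plus one parity block.
# The block contents are arbitrary sample bytes.
data = [bytes([value] * 4) for value in (1, 2, 3, 4, 5)]
parity = xor_blocks(data)

# Pretend the drive holding data[2] has failed.
survivors = data[:2] + data[3:]

# XOR of the surviving data blocks and the parity block gives the lost block.
rebuilt = xor_blocks(survivors + [parity])
print(rebuilt == data[2])  # True
```

The same property is why a second failure is fatal: with two blocks missing from a stripe, a single parity block is not enough to recover either one.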

RAID 10

Mirroring + striping. This is the fastest and most redundant solution for a storage array. The data is mirrored across pairs of drives and then striped for speed. This is very fast and very reliable. In RAID 10 you can (theoretically) lose two drives and still have all of your data, though if you lose both drives of the same mirrored pair it means catastrophic data loss. It is better than RAID 5 in almost every way. The one major drawback: you lose 50% of your data storage to redundancy. So my six 2TB drives would only yield 6TB of storage.
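The capacity arithmetic for the three levels can be sketched in a few lines of Python. The function name `usable_capacity_tb` is just something I made up for this example, and it uses nominal drive sizes (the formatted capacity the OS reports will be a bit lower).

```python
def usable_capacity_tb(level, drives, drive_tb):
    """Nominal usable capacity in TB for the RAID levels discussed here."""
    if level == 1:
        if drives != 2:
            raise ValueError("this sketch treats RAID 1 as a two-drive mirror")
        return drive_tb
    if level == 5:
        if drives < 3:
            raise ValueError("RAID 5 needs at least three drives")
        return (drives - 1) * drive_tb  # one drive's worth goes to parity
    if level == 10:
        if drives < 4 or drives % 2:
            raise ValueError("RAID 10 needs an even number of drives, four or more")
        return drives // 2 * drive_tb  # every drive has a mirror
    raise ValueError("unsupported RAID level")


# My storage array: six 2TB drives.
for level in (5, 10):
    usable = usable_capacity_tb(level, 6, 2)
    overhead = 1 - usable / (6 * 2)
    print(f"RAID {level}: {usable} TB usable, {overhead:.0%} lost to redundancy")
# RAID 5: 10 TB usable, 17% lost to redundancy
# RAID 10: 6 TB usable, 50% lost to redundancy
```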

The RAID 5 vs RAID 10 argument

There is a growing voice in the data storage community proclaiming that RAID 5 is a terrible solution. There are many valid points to these arguments, most of which revolve around performance when in degraded mode, which isn’t a big concern for a simple archive and storage array. The other big complaint is the increase in the chance of catastrophic failure when compared to RAID 1 or RAID 10.

- RAID5 is slow (writing)

Little to no impact in a data-storage array. 90%+ of your activity will be reading data, not writing. Even “slow” RAID 5 write speeds are enough to saturate a gigabit network.

- RAID5 can only support one drive failure

One is better than none, and granted it is not as good as two, but the massive increase in storage lost to redundancy is a hefty price to pay.

- RAID5 takes a long time to rebuild

This is when your array is vulnerable. A drive has failed, you are operating in degraded mode, you plug in a replacement drive and the array starts rebuilding (recalculating parity and writing the data to the new drive). If another drive fails during this period it means catastrophic data loss. The probability of this happening depends on the size of the array, the size of the drives in the array, the quality and age of the drives, and the speed of the controller. Generally speaking it has a very low chance of happening.
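For a rough feel of how long that vulnerable window lasts, here is a back-of-the-envelope sketch. It assumes the rebuild has to write the entire replacement drive at a steady rate; the 50MB/s figure is a hypothetical number for illustration, not something I measured, and real rebuilds slow down when the array is in use.

```python
def rebuild_hours(drive_tb, rebuild_mb_per_s):
    """Hours needed to write one whole replacement drive at a steady rate."""
    drive_mb = drive_tb * 1_000_000  # decimal units: 1 TB = 1,000,000 MB
    return drive_mb / rebuild_mb_per_s / 3600


# One 2TB drive at an assumed 50MB/s sustained rebuild rate.
print(f"{rebuild_hours(2, 50):.1f} hours")  # 11.1 hours
```

Halve the rebuild rate and the window doubles, which is why the speed of the controller matters here.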

- RAID5 arrays can be corrupted by a fatal read error when the array is operating in a degraded state.

This is the big one. Sometimes, very rarely, hard drives simply cannot read the data for a block. The drive will politely say “I’m sorry, but this block is gone.” This is a very rare occurrence indeed; you’ve probably never seen it in your lifetime. But if a tiny little error like this happens when the array is trying to rebuild, the controller will not be able to calculate parity and it will fail the array. Total data loss. The chance of this happening depends on how big your array is. The larger the array, the larger the chance of having a read error during a rebuild.
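Here is a small sketch of why the chance grows with array size. It assumes every bit read fails independently with the same probability, which is a simplification, and the `ASSUMED_ERRORS_PER_BIT` value is a hypothetical placeholder: look up the unrecoverable read error rate on your own drive's datasheet. The result is extremely sensitive to that number, so treat the output as a way to compare array sizes rather than as a prediction.

```python
import math


def p_read_error_during_rebuild(surviving_drives, drive_tb, errors_per_bit):
    """Chance of at least one unreadable bit while reading every surviving drive.

    Computes 1 - (1 - p)^n using log1p/expm1 so tiny probabilities stay accurate.
    """
    bits_read = surviving_drives * drive_tb * 1e12 * 8  # decimal TB to bits
    return -math.expm1(bits_read * math.log1p(-errors_per_bit))


ASSUMED_ERRORS_PER_BIT = 1e-15  # hypothetical; check your drive's datasheet

# A rebuild reads every surviving drive, so a bigger array means more bits at risk.
for total_drives in (3, 4, 6, 8):
    chance = p_read_error_during_rebuild(total_drives - 1, 2, ASSUMED_ERRORS_PER_BIT)
    print(f"{total_drives} x 2TB drives: {chance:.1%} chance of a read error")
```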

So yes, RAID 10 is certainly better for data security, but you pay for the increased security by dramatically decreasing the usable space in your array. Big hard drives, or big storage solutions, are expensive. In my case I couldn’t justify the cost: a 194% increase in the space required for redundancy for a ~2% decrease in chance of failure.

This is a very personal decision. If you have the money, or don’t need that much space, and truly value your data I say go with RAID 10. For me personally, I need every terabyte I can squeeze out of my system. So I went with RAID 5 for the storage array.

ICH10R RAID5 configuration

There are two configuration options that you need to set when building a RAID 5 array: stripe size and cluster size.

- Stripe size is the size of the RAID stripes that will be spread across the drives in the array. This value is set when you first set up the array in the RAID BIOS.

- Cluster size is the size of the clusters in the partition. This value is set when you format the drive in your operating system.

I did lots of reading, trying to figure out what the optimal stripe and cluster sizes are. There is lots of math and science involved in determining these values, and to be honest I didn’t really understand it. I have decided that this is a mix of black magic, voodoo, and a little witchcraft. Don’t bother trying to scientifically compute the correct values of your RAID settings; you will inevitably get it wrong, wasting days of initialization time in the process.

Instead, build the smallest RAID partition you can and run benchmarks. I used the ATTO Disk Benchmark tool because it gave me the most consistent readings. The procedure is simple, but time consuming:

- Build the smallest RAID partition you can in the controller, using all of the drives you will be using in the final array.

- Let the Intel Matrix software initialize the array. This will take a long time, depending on the size of the array. That’s why you want to make the smallest array possible.

- Format the new little array with various cluster sizes. Just do a quick format, this is nearly instant. Run your benchmarks here. Then reformat with a different cluster size. (Write down the slowest and fastest read and write speeds)

- Repeat the formatting process until you find the fastest cluster size for this stripe size.

- Go back to the RAID BIOS, delete the array and build a new little array with another stripe size. Start from step 1 again.
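If you would rather not keep the results on paper, the bookkeeping for the procedure above can be sketched in a few lines of Python. The `BenchmarkRun` record and `best_run` helper are names I made up for this example, and the two sample entries are the write speeds I report in this article (the second one rounded down to 80). Add one entry per stripe and cluster combination you test, and add a read speed field if reads vary for you too.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BenchmarkRun:
    """One benchmark result for a stripe size / cluster size combination."""
    stripe_kb: int
    cluster_kb: int
    write_mb_s: float


def best_run(runs):
    """Return the run with the highest write speed."""
    return max(runs, key=lambda run: run.write_mb_s)


runs = [
    BenchmarkRun(stripe_kb=128, cluster_kb=64, write_mb_s=20.0),
    BenchmarkRun(stripe_kb=64, cluster_kb=32, write_mb_s=80.0),
]

winner = best_run(runs)
print(f"Best: {winner.stripe_kb}k stripes / {winner.cluster_kb}k clusters "
      f"at {winner.write_mb_s:.0f}MB/s writes")
# Best: 64k stripes / 32k clusters at 80MB/s writes
```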

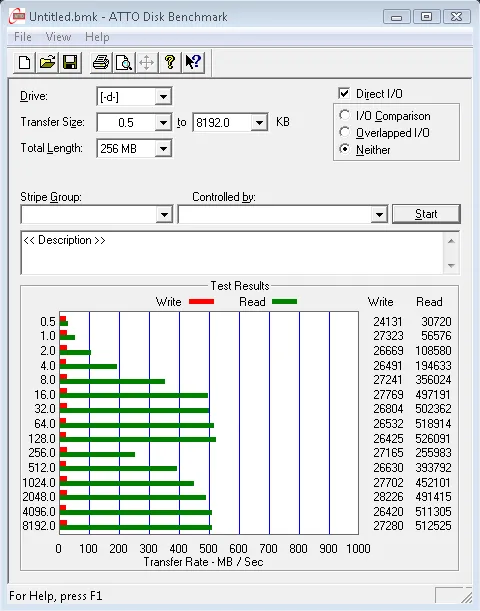

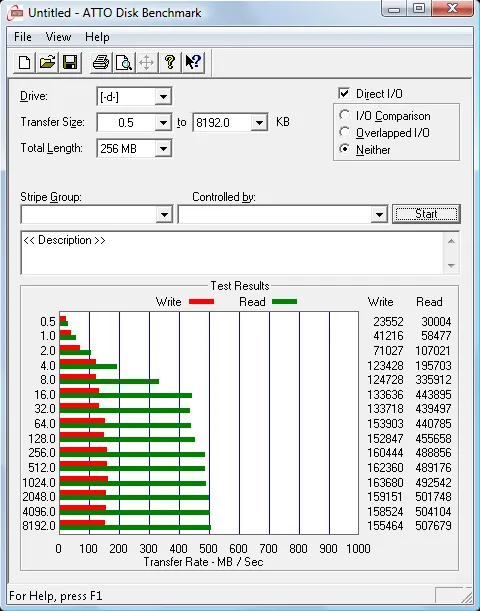

This takes a while, but it is the only way to find the best settings for your array. The difference between poor settings and perfect settings is amazing. I went from 20MB/s writes with 128k stripes and 64k clusters to over 80MB/s with 64k stripes and 32k clusters, which I found to be the optimal values for my particular array.

| 128k Stripes / 64k Clusters | 64k Stripes / 32k Clusters |
|---|---|
| 20MB/s writes | over 80MB/s writes |

Indeed, these numbers speak for themselves. Take the time to find the optimal settings for your RAID array.

Write caching

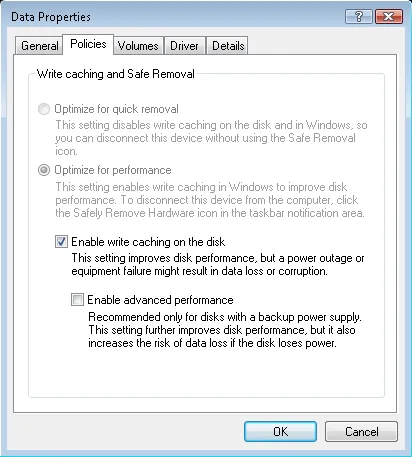

RAID 5 has notoriously slow write speeds. The way we make up for these slow writes is write caching. Write caching is when we store the data to be written in memory and let the RAID controller write it from memory to the array as fast as possible. There is however an added danger with write caching: if the power were to fail, or the system were to crash, the data still sitting in memory would never be written. In a worst case scenario this can actually cause the RAID array to become corrupted. This is why it is important to always have a battery backup on a RAID system.
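As a toy illustration of that danger, here is a minimal write-back cache in Python. This is not how the Intel controller or Windows implement caching; the `WriteBackCache` class and the dictionary standing in for the disk are made up for the example. It only shows the basic trade: writes return immediately, but anything not yet flushed disappears if the power goes.

```python
class WriteBackCache:
    """Toy write-back cache: writes land in memory first, on disk only on flush."""

    def __init__(self, disk):
        self.disk = disk      # dict standing in for persistent storage
        self.pending = {}     # acknowledged writes that are not on disk yet

    def write(self, block, data):
        self.pending[block] = data  # fast: nothing touches the disk here

    def read(self, block):
        # Newest data wins, so check the cache before the disk.
        return self.pending.get(block, self.disk.get(block))

    def flush(self):
        self.disk.update(self.pending)  # slow part, done in the background
        self.pending.clear()


disk = {}
cache = WriteBackCache(disk)

cache.write(0, b"first")
cache.flush()              # block 0 is now safely on disk
cache.write(1, b"second")  # acknowledged, but still only in memory

# Power failure: the in-memory cache is gone before it could flush.
del cache
print(disk)  # {0: b'first'}
```

A battery backup simply buys the time for that last flush to happen.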

There are three levels of write caching available in Windows. The first is in the Intel Matrix Controller software, Volume Write-Back Caching. This is the single most effective write caching you have and it is extremely effective. Always keep Write-Back Cache enabled.

The second is basic OS write caching. In Windows you’ll find this in the device properties for the drive in the Device Manager. This setting is another level of write caching in the OS. It is relatively safe, so I recommend using this basic write cache as well.

The third is the “Enable advanced performance” option under basic caching. This pushes caching even further. These lazy writes are frankly dangerous and have little to no benefit in my testing. I recommend leaving this option disabled.

Continued in Part 3: Wrapping up and product reviews.

Comments

An interesting read so far. I’m looking into setting up a smaller NAS of my own, and this is some good info. I look forward to reading part 3 when you get to it.

Hey bud, I’m flat out EXHAUSTED. I’ve been going at this project for days on end.. last night I was up till 9am.. firstly, the antec 1200, which was a GREAT suggestion I took of yours. doesn’t come with a basic speaker! So, when I wasn’t getting video on my 46″ LCD Sony using..

Gigabyte X58 + i7

ATI 5770

vs.

Gigabyte GA45 + Q9550

ATI 5770

It sent me in to a troubleshooting meltdown. First, I swapped out the vid card with my old system and tested it – worked. So I though, either the CM600 silent PSU was under powered, the CPU (Frys open box, last one) was bad, the MB was bad.. NO BEEPS to work with. Since the MB powered up and lights came on, assumed it wasn’t the MB (for now).. finally swapped monitors (obviously every consumer has to have 2 monitors sitting around to see if gigabyte changes their BIOS control for the LCD monitor.. and in this case, by LCD, I mean lowest common denominator; as in, WHY FUCK with 640×480??? So, after troubleshooting for hours, I finally realized gigabyte did something stupid to the BIOS for inexplicable cause and to my detriment. Fine.. but, that doesn’t explain… the second problem! Joy. The computer will power on for say, 90 seconds, then stay on for 50, then 15, than 5, then nothing. WITH VIDEO. I’ve reset the firmware (repeatedly).. The only thing I could deduce from such linear behavior was that there was a heat build-up, and wouldn’t you know it?? Fry’s had sold me a used CPU where the thermal grease connection was inadequate. New CPU cooler, the Mugen-2 Rev.B; its the coldest running fastest cheapest combo. I analyzed that for over an hour last night..

Anyway, system booting, 7 installed, and started experimenting with RAID configs.. but I only had available to me ‘stripe size’ … then I realized that if I go to manage within Win, I can config the drive there for RAID.. however, I don’t think it’ll do RAID 5 unless you have 4 drives, right? And you’re using the disk manager under the “manage” option of “My computer”… do I understand it correctly?

So thats where I’m at… My system tho, I have to say, is SUPREMELY quiet for what its doing.I do need your advice though – I need to find the SMALLEST possible LCD or TFT, whatever, display to mount near this thing since the BIOS wont sync with my 46”, and because the smaller the monitor, the smaller the battery drain on the UPS. Also, this is going in a media center context, so I want it to be unobtrusive; the TV is the focal point.. and two identical images running is annoying. Also, the second tiny display will be a nice way to just manage a playlist, library, whatnot.

For backing your stuff up, I would use (and I’ll give you details of with a youtube vid if you like after I do it) acronis, and segregate your RAID by topic! Or security level. For instance, I’m going to make 3 partitions; one for software I have on a network share… one for media, and a 3rd for my personal files. The beauty of this is that I can create policies per HDD of users, instead of folders.. and though I’m not at the stage of being fully up to date on administering access of read/write but not alter or delete for 2 entire volumes, and NO access for the volume for my back ups (recovery for both of my systems and my lifelong aggregate of personal files) … My naming convention will keep user rights clean and obvious I think, and with such a segregation, in your case, you could just… And though you think that the 12TB of space you have now is beyond what you could conveniently and cost effective to in 2TB increments.. I have supreme confidence that 3TB drives are 7months out, and 4 tb drives are not more than 14mths.. and if you can’t fit what’s critical to you in 8TB, and if RAID plus offline backup are not secure enough.. well, it just isn’t “budget” sized anymore. This stretches that term.

use one of those and pick which topics are of importance to you.. Fault tolerant is probably an acceptable level for some percentage of files you have, whereas double coverage is better for you in others. And if that’s not enough, you could do occasional Mozy back ups.

Looking forward to your reply.. I’m actually racing against a deadline of a product that I can’t return that my friends got me that requires this system to be able to TEST if it works before the return policy ends! Ha.. that and taxes tomorrow. JOY!

Goodnight man.. and I’d like to see pictures, inside, bothsides of inside.. I’ll do the same, but mine is certainly not a work of art in terms of tidiness.

Talk to ya soon..

t

I just proofed that.. and fell asleep a few times while proofing it. I’m SO tired right now I can’t remember a thought long enough to complete the sentence. Please excuse the pidgin… I’m just really hopeful I’ll have a reply from you when I wake up.

thanks again.

also, have you found a decent CPU temp app/GPU temp app?

I’m going to be ripping TONS of media for about a month straight… I’m firing Dish but I want my archived DVR’s and I have a device that’ll let me do that.. but ripping, editing, then encoding video gets everything HOT.

“Expandable base that I can add storage to when it become necessary” — Do you mean expand the size of the RAID container by adding drives? If that’s EVER crossed your mind, I have a SPECTACULAR read for you: http://tinyurl.com/y5pqhod

Are you using RDC on this computer? If so, what method? Windows native RDC, or 3rd party?

More thoughts… have you done any tests with Write Caching enabled?

Clearly, your goals are primarily for local and small use-groups (2-3 simultaneous connections, tops I assume), but there may be some information on this thread you find relevant and consistent with experiences: http://tinyurl.com/y6cw5e9

Finally, you said “good hard drive cooling” .. are you using anything beyond the included fan? I’m assuming not, and neither am I.

I have looked absolutely everywhere I can think of; how do you control the cluster size??

DAMMIT!!!! I didn’t experiment with cluster size because I forgot it was part of the FORMATTING process! not the RAID building itself. I feel like an idiot; I’m on day three right now with about 15% left to go for the 3TB volume. :-(

I looked at the page you provided for coretemp, but ultimately its not a gadget, so I actively have to seek out that information, as opposed to it constantly, passively being reported. Maybe there’s a way within ‘options’ to create an event, which then could cascade in to an emailed alert.. but the gadget for me is my preference.. perhaps someone will make a coretemp gadget that has emailed alerts within it!? One can hope. There appears to be a program that monitors multiple cores of a processor AND video aggregated in one gadget, however, the reviews suggest its not free. MSI afterburner seems decent.

Nice advice on the monitor – USB! I wouldn’t have thought of it.

Okay – for the RAID situation.. I’m going to HAVE to send you a screen shot. First off, the options in the Intel RAID mgr that is loaded immediately after POST gave no options different than those available within Windows. Moreover, in stark contrast to what you’ve said, I can use a 3-drive array for RAID-5. I’ve researched this, because I don’t like contradicting someone without being certain.. under the row of “3 or 4 drive RAID 5” .. scan over and you’ll see ICH10R. I can understand a configuration that doesn’t relate to your project not being something you memorized. :-)

http://www.intel.com/support/chipsets/imsm/sb/CS-022304.htm

I’m really looking forward to your pictures! I actually ordered some short sata cables that are 6″ to declutter my case. I’d really like to see where you placed everything and how you organized it.

Finally!!! For sharing.. since you have a monstrous capacity, I presume you have had to come up with something that NAS would have made more simplified; user-rights and accounts. My plan is to create user accounts but hide them. Access to the folders I want to provide it for, permissions invoked, but no login option for the accounts. Fracturing the RAID volume in to partitions commits me to the arrangement, whereas with folders I can use the capacity more efficiently.

I presume you’re aware that the more data you store on this array, the slower it will become; linearly. And the bigger the volume is, the slower it can get. That, I presume, is basis for smaller arrays… food for thought.

Let me know if you’d like to see pictures of my final setup: Interior and Desktop, but you first. lol. I want to see how embarrassed I should be with my diligence.

Thanks for the reply – and yes, I know my writing was a disaster!

Truman

Whats your opinion on Quick Formatting if I’ve chosen the wrong cluster size?

Thanks for the quick and thorough reply. The “rotational density” is quite a good concept, and has reformed my view; thanks. And, yes, I agree that more drives [should] mean better performance, however, when I was looking at the add-on arrays like QNAP etc., they didn’t seem to scale in such a compelling manner. In the end, the cost benefit of it seemed questionable. Like many things in this realm, the synthetic (in vitro) theories of how something could perform rarely equate to real-life (in vivo) throughput. I have an SSD in the notebook I’m replying to you on, and its performance wasn’t as noticeable as people postulate it [should] be. But, on the bright side, in having experimented with the IMM software it seeeemed like there was a possibility to migrate the array size, and on Intel’s site, I believe I saw something about it as well. Whether it really works or not – takes a year to do, or causes data corruption in the process are all concerns to discover also.

So far, Ive been quite conscientious about what I’ve done with my system since I’ve either been transferring large data-drives to it, formatting, or as of today, doing a data recovery project. All of these operations are multi-day-processes.. so risking a reboot/crash from anything has shied me away from more CPU intensive activities.. but I do want to see what this 920 can do in comparison to my Q9550. My immediate-to-follow project is going to be transferring from my DVR+750GB drive all my otherwise-locked-down-to-DishTV media that I’ve collected over the years. in fact, the reason I started on this ordained machine was to have a device powerful enough to encode H.264 streamed to it, capacious enough to store it all, and reliable enough to never lose it. The Hauppauge HD PVR is supposed to be able to extract.. or probably more accurately, siphon off the otherwise-HDCP media. I do wish it were a nicer looking device, did 1080p instead of 1080i, and had ANYTHING faster than USB 2.0, but, if all it needs is 2.0 thats fine, and if it will do 720p I’ll be elated. Also, once I strip off the commercials I’m going to have a FANTASTIC library. In fact, I’ll send you a picture of my entertainment center when I’m done with this project and the ugly hauppauge and what it’s wiring has done to the otherwise clean setup.. I also have a 400 bluray player that acquires from internet DB all the title info.

I posted a link to my CPU cooler. I guess I should also have posted a link to its performance. It’s about the awesome-est, best buy for a CPU cooler there is, and my machine with it is literally silent. The Mugen-2 Rev.B … trust me.. it’s nice. Huge heatsink and ginormous fan. It’s actually just.. neurosis that I even bother with a CPU monitor.. or geekdom.

I hope I didn’t seem condescending or arrogant in my last email. I apologize if I was. :)

Last night, after my formatting was complete and I finally wasn’t afraid to touch my system I installed some 6″ sata cables. I have to say, they are PERFECT. In fact, I need to buy one more cable thats 4″ shorter than the one I’m using for my boot drive and the inside of my case will be pretty much as clean as I can see making it without an engineering degree. Definitely pick up a set of those! Cheap cheap on ebay.

I also installed a wireless card.. I’m using 1 routher for my server to connect to the internet, and another for LAN. Kinda bummed with the card. Its an N card, however, with the advantage of an external antenna and being closer than my laptop to the source, it has basically no signal. I’m wondering if being near the power supply, or all the AC cables I have passing near the back of the machine is somehow interfering with reception. I’m going to buy another N card and isolate it.. I bought the card from newegg and when I received it it said it was from “RMA” .. I couldn’t decide if they’d sent me a broken one from the beginning or if someone just had really poor awareness of the abbrev. of their company name and the industry phrase for broken shit. Everyone else said the card was good… so maybe I did get an RMA’s product. Or RMA is a drop shipper of neweggs… who cares right? Have you seen sata docks? They hold any sata device…?

As this project comes to a close I feel some boredom creeping in. It was fun analyzing everything.

Have a good weekend Steve.

What do you use as software to control your fan speeds? I think the Gigabyte software doesn’t really do what I tell it to. Also, my CPU hovers at 35° Celsius with this cooler.. When I start encoding video, I’ll take a screen shot of the histogram showing how many cores are utilized to what degree simultaneous to the software showing the temperature and RPMs. Mind you, this fan is truly silent at 10k. What’s NICE is, I can upgrade the size of my array by swapping out the drives 1 by 1, no brains involved. Or change them for faster drives, such as the Barracuda XT, which has insane rates. Did you see the Engadget article today? On 10 2TB drives tested…

http://hothardware.com/Articles/Definitive-2TB-Hard-Drive-Roundup/?page=10

No, a SATA dock.. as in, eSATA. It plugs in to one of your motherboard’s available SATA slots, and you get the standard 3GB/s transfer rate. Of course, you’re limited by 2TB increments, but perhaps you organize your data as I do; Software, Back-Up, Media, etc – and could save your arrays cumulative information in these same logical groupings, by category that is. That said, I’d think having more than 2TB for each of these categories would be atypical.. but even then could be addressed.

Yes, it [should] be wired.. but, I use external drives to move large amounts of data back and forth, but for nabbing files as I need them – wireless is fine for now. It defeats the purpose of having a notebook to tie it down – and if I weren’t going to use a notebook, 2TBs of space would negate the value of my server.

I wasn’t aware fan speed could be controlled physically! Will fiddle with this evening!! :-)

Did you check out the CPU fan I mentioned? What were your thoughts..?

Do you have a proprietary DVR (as in, one you can’t save the media from) ..?

Typo.. my reference to 2GB should be 2TB.

You still going to post those pictures?

clearly image codes don’t work here.. was gonna try to make it easy for you.. here are the links.

http://i74.photobucket.com/albums/i258/trumanhw/Antec_Server/PPL_9870.jpg

http://i74.photobucket.com/albums/i258/trumanhw/Antec_Server/PPL_9871.jpg

http://i74.photobucket.com/albums/i258/trumanhw/Antec_Server/PPL_9872.jpg

http://i74.photobucket.com/albums/i258/trumanhw/Antec_Server/PPL_9873.jpg

http://i74.photobucket.com/albums/i258/trumanhw/Antec_Server/PPL_9874.jpg

http://i74.photobucket.com/albums/i258/trumanhw/Antec_Server/PPL_9876.jpg

In the mix.. you’ll see (not to imply you care..)

Pic1: The Server – obviously, with BD-RW and removable HDD.

Pic2: Close up pics of 4″ cables to 3 SATA drives and 10″ cable to OS HDD in caddy.

Pic3: Close up pics of 4″ cables to 3 SATA drives

Pic4: The back of the eSATA dock

Pic5: The front of eSATA dock

Pic6: From top to bottom per column:

– Hauppauge HD PVR (extract movies from DVR – DishTV in this case)

– VHS –> DVD burner (convenience.. pretty much done with it)

– Pioneer Elite VSX-94THX receiver**

– 750GB Ext drive for DishTV

– DishTV DVR. Its the best out right now…

– Sony 400 Blu-Ray disc player — downloads all DVD/audio titles from internet.

Center device is backup power supply for computer (nothing else attached). Tested to 13 minutes reserve.

Receiver being repaired and replaced. Weak iPod management, poor network/DLNA support, and had a weak HDCP board that burnt out all HDMI ports during haphazard trial and error to discover that my ATI 5770 [might] be putting out a hot signal. Replacing with Onkyo TX-NR807, which has Audyssey (compresses dynamic range so that the volume used to hear dialog doesn’t result in annoying your neighbors during action scenes in movies).

Hope this isn’t hijacking of your page.. would like to see your choices.

Regards

PS – yes, I’m going to clean up the rats nest of a cable disaster after I get my new receiver. lol

Please ignore the studio light in the bg.

Yeah, heatsink IS massive.. but that was my point exactly. Keep it cold, eliminate CPU errors. As much as I hate the installation of that behemoth, I really trust it.

The lights on the twelve are a bit annoying.. though, I can disable them. I’ve just been lazy about it. Some people use lights/windows in their case as… status amongst their friends. I like elegant/streamlined things.. it’s tough though, as mfg have a compatible agenda with the people I just mentioned in that it promotes their product. C’est la vie.

Looking forward to your pictures.. will they be in a new article when you do it? PS… not to assume your photog knowledge, but, make sure you flood your server with light to get best possible picture quality. :)

Looks great steve. But truly.. I really recommend getting 3x 4″ SATA cables and 3x 6″ for your array.. are you out of sata ports? I didn’t realize but we have different motherboards; I’m on the X58.. does yours have additional SATA slots? And, as far as your 750’s, they’re, … IDE? What about ROM drive? What are you using for that? I’ve discovered (and prefer) booting win7 from USB pen drive.. What do you do with 1.5TB of an OS drive?? Do you use windows backup for your OS drive?

I’ve discovered a problem with using soft-RAID that’s a real bummer. If my system crashes, it’ll lose a drive and have to rebuild, for days. So any time I tinker with things, if it results in a crash, data integrity is at stake. I have a backup power unit, but the RAID needs one in the event of a system crash, not just in the event of a power outage. I really see no way in fixing this except getting a card with BBU. Even that however, I think may not work, as even with a BBU the drives will lose power if the system crashes.. This will be my next major purchase..